Foram encontradas 45.274 questões.

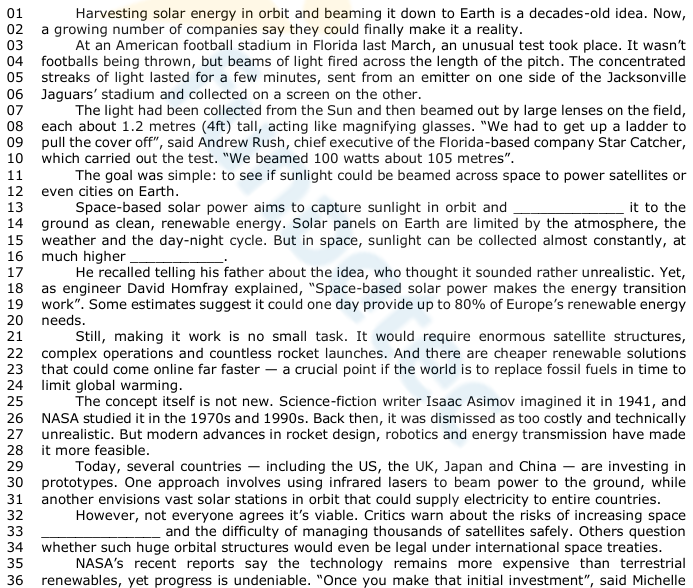

Space power: The dream of beaming solar energy from orbit

(Available at: www.bbc.com/future/article/20251029-the-beam-dream-should-we-build-solar-farms-in-space–

text specially adapted for this test).

I. The clause “have made it more feasible” (l. 27-28) expresses an action that began in the past and continues to have effects in the present.

II. In the sentence “It would require enormous satellite structures” (l. 21), the verb form “would require” indicates a hypothetical situation rather than a real one.

III. In the sentence “making it work is no small task” (l. 21), the structure “making it work” functions as the subject of the sentence.

IV.The structure “it was dismissed as too costly” (l. 26) refers to a past passive construction in the simple past.

Which ones are correct?

Provas

- Vocabulário | Vocabulary

- Sinônimos | Synonyms

- Formação de palavras (prefixos e sufixos) | Word formation (prefix and suffix)

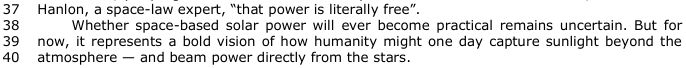

Space power: The dream of beaming solar energy from orbit

(Available at: www.bbc.com/future/article/20251029-the-beam-dream-should-we-build-solar-farms-in-space–

text specially adapted for this test).

( )The word “feasible” (l. 28) could be replaced by “achievable” without changing the meaning.

( ) The prefix un– in “uncertain” (l. 38) and “unrealistic” (l. 17) indicates reversal of action, similar to the verb “undo”.

( ) The word “viable” (l. 32) refers to something that can function successfully.

( ) The term “renewable” (l. 14) is formed by the addition of the prefix re- and the suffix -able, which mean, respectively, “not” and “capability/possibility”.

The correct order of filling in the parentheses, from top to bottom, is:

Provas

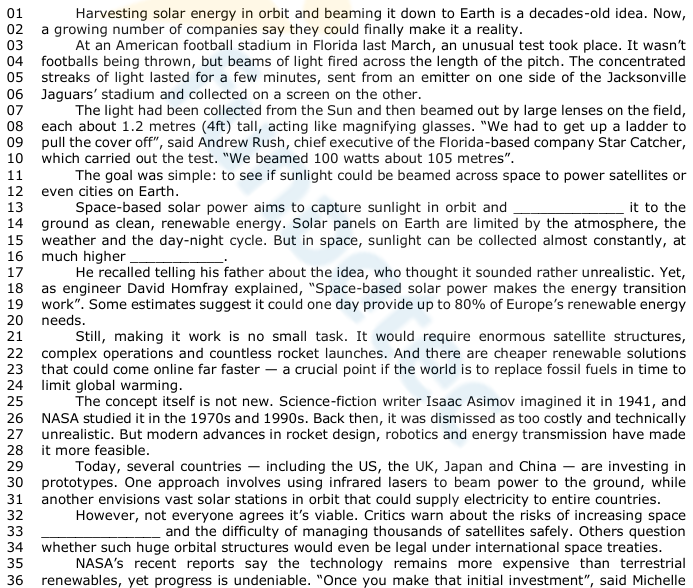

Space power: The dream of beaming solar energy from orbit

(Available at: www.bbc.com/future/article/20251029-the-beam-dream-should-we-build-solar-farms-in-space–

text specially adapted for this test).

Provas

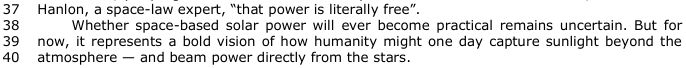

Space power: The dream of beaming solar energy from orbit

(Available at: www.bbc.com/future/article/20251029-the-beam-dream-should-we-build-solar-farms-in-space–

text specially adapted for this test).

I. The verb form “could finally make” (l. 02) expresses a future possibility.

II. The sentence “The light had been collected from the Sun” (l. 07) is in the passive voice.

III. The clause “whether such huge orbital structures would even be legal” (l. 34) expresses a condition.

Which ones are correct?

Provas

Provas

Provas

Provas

Provas

Provas

Provas

Caderno Container